
| 1 | --- |

| 2 | library_name: transformers |

| 3 | license: apache-2.0 |

| 4 | license_link: https://huggingface.co/Qwen/Qwen3.6-35B-A3B/blob/main/LICENSE |

| 5 | pipeline_tag: image-text-to-text |

| 6 | --- |

| 7 | |

| 8 | # Qwen3.6-35B-A3B |

| 9 | |

| 10 | <img width="400px" src="https://qianwen-res.oss-accelerate.aliyuncs.com/Qwen3.6/logo.png"> |

| 11 | |

| 12 | [Qwen Chat](https://chat.qwen.ai) |

| 13 | |

| 14 | > [!Note] |

| 15 | > This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format. |

| 16 | > |

| 17 | > These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc. |

| 18 | |

| 19 | Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience. |

| 20 | |

| 21 | ## Qwen3.6 Highlights |

| 22 | |

| 23 | This release delivers substantial upgrades, particularly in: |

| 24 | |

| 25 | - **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision. |

| 26 | - **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead. |

| 27 | |

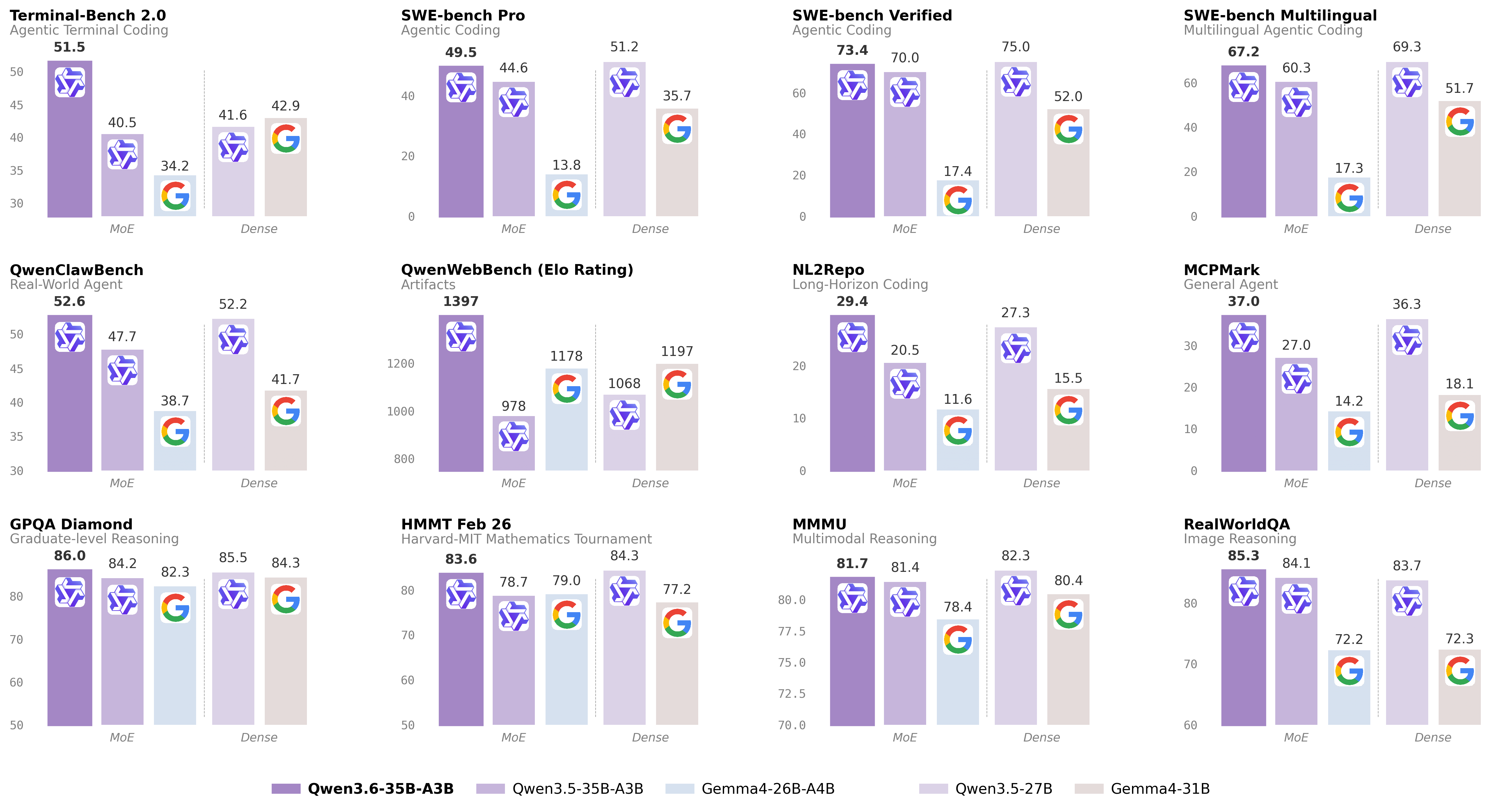

| 28 |  |

| 29 | |

| 30 | For more details, please refer to our blog post [Qwen3.6-35B-A3B](https://qwen.ai/blog?id=qwen3.6-35b-a3b). |

| 31 | |

| 32 | ## Model Overview |

| 33 | |

| 34 | - Type: Causal Language Model with Vision Encoder |

| 35 | - Training Stage: Pre-training & Post-training |

| 36 | - Language Model |

| 37 |     - Number of Parameters: 35B in total and 3B activated |

| 38 |     - Hidden Dimension: 2048 |

| 39 |     - Token Embedding: 248320 (Padded) |

| 40 |     - Number of Layers: 40 |

| 41 |     - Hidden Layout: 10 × (3 × (Gated DeltaNet → MoE) → 1 × (Gated Attention → MoE)) |

| 42 |     - Gated DeltaNet: |

| 43 |         - Number of Linear Attention Heads: 32 for V and 16 for QK |

| 44 |         - Head Dimension: 128 |

| 45 |     - Gated Attention: |

| 46 |         - Number of Attention Heads: 16 for Q and 2 for KV |

| 47 |         - Head Dimension: 256 |

| 48 |         - Rotary Position Embedding Dimension: 64 |

| 49 |     - Mixture of Experts: |

| 50 |         - Number of Experts: 256 |

| 51 |         - Number of Activated Experts: 8 Routed + 1 Shared |

| 52 |         - Expert Intermediate Dimension: 512 |

| 53 |     - LM Output: 248320 (Padded) |

| 54 |     - MTP: trained with multi-steps |

| 55 | - Context Length: 262,144 natively and extensible up to 1,010,000 tokens. |
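The hidden layout listed above can be expanded into a per-layer sequence to check that it adds up to the stated 40 layers. The following is a minimal sketch, not the model's actual configuration code: the names `gated_deltanet`, `gated_attention`, and `build_layout` are hypothetical labels chosen for illustration, and the sketch assumes every token mixer is followed by one MoE block, as the layout string suggests.

```python
from collections import Counter

# Values taken from the Model Overview list.
NUM_BLOCKS = 10          # outer repetition: 10 x (...)
DELTANET_PER_BLOCK = 3   # 3 x (Gated DeltaNet -> MoE)
ATTENTION_PER_BLOCK = 1  # 1 x (Gated Attention -> MoE)
ROUTED_ACTIVE = 8        # routed experts activated per token
SHARED_ACTIVE = 1        # shared expert activated per token


def build_layout() -> list[str]:
    """Expand the layout string into one token-mixer label per layer."""
    layout: list[str] = []
    for _ in range(NUM_BLOCKS):
        layout.extend(["gated_deltanet"] * DELTANET_PER_BLOCK)
        layout.extend(["gated_attention"] * ATTENTION_PER_BLOCK)
    return layout


layout = build_layout()
counts = Counter(layout)
active_experts = ROUTED_ACTIVE + SHARED_ACTIVE

print("layers:", len(layout))
print("gated_deltanet layers:", counts["gated_deltanet"])
print("gated_attention layers:", counts["gated_attention"])
print("experts active per token in each MoE block:", active_experts)
```

Under these assumptions, three of every four layers use linear attention (Gated DeltaNet) and every fourth layer uses Gated Attention.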

| 56 | |

| 57 | |

| 58 | ## Benchmark Results |

| 59 | |

| 60 | ### Language |

| 61 | |

| 62 | <div style="font-family:-apple-system,BlinkMacSystemFont,'Segoe UI',Roboto,sans-serif;max-width:1000px;margin:0 auto;padding:16px 0"> |

| 63 | <table style="width:100%;border-collapse:collapse;font-size:13px"> |

| 64 | <thead><tr> |

| 65 | <th style="padding:10px 7px;text-align:left;font-weight:600;border-bottom:2px solid #7c3aed;color:#7c3aed"></th><th style="padding:10px 7px;text-align:center;font-weight:500;border-bottom:2px solid #7c3aed;color:#7c3aed;font-size: 14px;">Qwen3.5-27B</th><th style="padding:10px 7px;text-align:center;font-weight:500;border-bottom:2px solid #7c3aed;color:#7c3aed;font-size: 14px;">Gemma4-31B</th><th style="padding:10px 7px;text-align:center;font-weight:500;border-bottom:2px solid #7c3aed;color:#7c3aed;font-size: 14px;">Qwen3.5-35B-A3B</th><th style="padding:10px 7px;text-align:center;font-weight:500;border-bottom:2px solid #7c3aed;color:#7c3aed;font-size: 14px;">Gemma4-26BA4B</th><th style="padding:10px 7px;text-align:center;font-weight:500;border-bottom:2px solid #7c3aed;color:#7c3aed;font-size: 14px;">Qwen3.6-35B-A3B</th></tr></thead> |

| 66 | <tbody> |

| 67 | <tr><td colspan="6" style="padding:8px 12px;font-weight:600;color:#7c3aed;border-bottom:1px solid rgba(124, 58, 237, 0.2);background:rgba(124, 58, 237, 0.1)">Coding Agent</td></tr> |

| 68 | <tr> |

| 69 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">SWE-bench Verified</td> |

| 70 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">75.0</td> |

| 71 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">52.0</td> |

| 72 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">70.0</td> |

| 73 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">17.4</td> |

| 74 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">73.4</td> |

| 75 | </tr> |

| 76 | <tr> |

| 77 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">SWE-bench Multilingual</td> |

| 78 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">69.3</td> |

| 79 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">51.7</td> |

| 80 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">60.3</td> |

| 81 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">17.3</td> |

| 82 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">67.2</td> |

| 83 | </tr> |

| 84 | <tr> |

| 85 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">SWE-bench Pro</td> |

| 86 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">51.2</td> |

| 87 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">35.7</td> |

| 88 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">44.6</td> |

| 89 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">13.8</td> |

| 90 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">49.5</td> |

| 91 | </tr> |

| 92 | <tr> |

| 93 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">Terminal-Bench 2.0</td> |

| 94 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">41.6</td> |

| 95 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">42.9</td> |

| 96 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">40.5</td> |

| 97 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">34.2</td> |

| 98 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">51.5</td> |

| 99 | </tr> |

| 100 | <tr> |

| 101 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">Claw-Eval <sub><small>Avg</small></sub></td> |

| 102 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">64.3</td> |

| 103 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">48.5</td> |

| 104 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">65.4</td> |

| 105 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">58.8</td> |

| 106 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">68.7</td> |

| 107 | </tr> |

| 108 | <tr> |

| 109 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">Claw-Eval <sub><small>Pass^3</small></sub></td> |

| 110 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">46.2</td> |

| 111 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">25.0</td> |

| 112 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">51.0</td> |

| 113 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">28.0</td> |

| 114 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">50.0</td> |

| 115 | </tr> |

| 116 | <tr> |

| 117 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">SkillsBench <sub><small>Avg5</small></sub></td> |

| 118 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">27.2</td> |

| 119 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">23.6</td> |

| 120 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">4.4</td> |

| 121 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">12.3</td> |

| 122 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">28.7</td> |

| 123 | </tr> |

| 124 | <tr> |

| 125 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">QwenClawBench</td> |

| 126 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">52.2</td> |

| 127 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">41.7</td> |

| 128 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">47.7</td> |

| 129 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">38.7</td> |

| 130 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">52.6</td> |

| 131 | </tr> |

| 132 | <tr> |

| 133 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">NL2Repo</td> |

| 134 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">27.3</td> |

| 135 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">15.5</td> |

| 136 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">20.5</td> |

| 137 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">11.6</td> |

| 138 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">29.4</td> |

| 139 | </tr> |

| 140 | <tr> |

| 141 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">QwenWebBench</td> |

| 142 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">1068</td> |

| 143 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">1197</td> |

| 144 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">978</td> |

| 145 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">1178</td> |

| 146 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">1397</td> |

| 147 | </tr> |

| 148 | <tr><td colspan="6" style="padding:8px 12px;font-weight:600;color:#7c3aed;border-bottom:1px solid rgba(124, 58, 237, 0.2);background:rgba(124, 58, 237, 0.1)">General Agent</td></tr> |

| 149 | <tr> |

| 150 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">TAU3-Bench</td> |

| 151 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">68.4</td> |

| 152 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">67.5</td> |

| 153 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">68.9</td> |

| 154 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">59.0</td> |

| 155 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">67.2</td> |

| 156 | </tr> |

| 157 | <tr> |

| 158 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">VITA-Bench</td> |

| 159 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">41.8</td> |

| 160 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">43.0</td> |

| 161 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">29.1</td> |

| 162 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">36.9</td> |

| 163 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">35.6</td> |

| 164 | </tr> |

| 165 | <tr> |

| 166 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">DeepPlanning</td> |

| 167 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">22.6</td> |

| 168 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">24.0</td> |

| 169 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">22.8</td> |

| 170 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">16.2</td> |

| 171 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">25.9</td> |

| 172 | </tr> |

| 173 | <tr> |

| 174 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">Tool Decathlon</td> |

| 175 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">31.5</td> |

| 176 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">21.2</td> |

| 177 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">28.7</td> |

| 178 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">12.0</td> |

| 179 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">26.9</td> |

| 180 | </tr> |

| 181 | <tr> |

| 182 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">MCPMark</td> |

| 183 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">36.3</td> |

| 184 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">18.1</td> |

| 185 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">27.0</td> |

| 186 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">14.2</td> |

| 187 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">37.0</td> |

| 188 | </tr> |

| 189 | <tr> |

| 190 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">MCP-Atlas</td> |

| 191 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">68.4</td> |

| 192 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">57.2</td> |

| 193 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">62.4</td> |

| 194 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">50.0</td> |

| 195 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">62.8</td> |

| 196 | </tr> |

| 197 | <tr> |

| 198 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">WideSearch</td> |

| 199 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">66.4</td> |

| 200 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">35.2</td> |

| 201 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">59.1</td> |

| 202 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">38.3</td> |

| 203 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">60.1</td> |

| 204 | </tr> |

| 205 | <tr><td colspan="6" style="padding:8px 12px;font-weight:600;color:#7c3aed;border-bottom:1px solid rgba(124, 58, 237, 0.2);background:rgba(124, 58, 237, 0.1)">Knowledge</td></tr> |

| 206 | <tr> |

| 207 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">MMLU-Pro</td> |

| 208 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">86.1</td> |

| 209 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">85.2</td> |

| 210 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">85.3</td> |

| 211 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">82.6</td> |

| 212 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">85.2</td> |

| 213 | </tr> |

| 214 | <tr> |

| 215 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">MMLU-Redux</td> |

| 216 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">93.2</td> |

| 217 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">93.7</td> |

| 218 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">93.3</td> |

| 219 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">92.7</td> |

| 220 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">93.3</td> |

| 221 | </tr> |

| 222 | <tr> |

| 223 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">SuperGPQA</td> |

| 224 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">65.6</td> |

| 225 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">65.7</td> |

| 226 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">63.4</td> |

| 227 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">61.4</td> |

| 228 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">64.7</td> |

| 229 | </tr> |

| 230 | <tr> |

| 231 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">C-Eval</td> |

| 232 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">90.5</td> |

| 233 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">82.6</td> |

| 234 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">90.2</td> |

| 235 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">82.5</td> |

| 236 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">90.0</td> |

| 237 | </tr> |

| 238 | <tr><td colspan="6" style="padding:8px 12px;font-weight:600;color:#7c3aed;border-bottom:1px solid rgba(124, 58, 237, 0.2);background:rgba(124, 58, 237, 0.1)">STEM & Reasoning</td></tr> |

| 239 | <tr> |

| 240 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">GPQA</td> |

| 241 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">85.5</td> |

| 242 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">84.3</td> |

| 243 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">84.2</td> |

| 244 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">82.3</td> |

| 245 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">86.0</td> |

| 246 | </tr> |

| 247 | <tr> |

| 248 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">HLE</td> |

| 249 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">24.3</td> |

| 250 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">19.5</td> |

| 251 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">22.4</td> |

| 252 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">8.7</td> |

| 253 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">21.4</td> |

| 254 | </tr> |

| 255 | <tr> |

| 256 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">LiveCodeBench v6</td> |

| 257 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">80.7</td> |

| 258 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">80.0</td> |

| 259 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">74.6</td> |

| 260 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">77.1</td> |

| 261 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">80.4</td> |

| 262 | </tr> |

| 263 | <tr> |

| 264 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">HMMT Feb 25</td> |

| 265 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">92.0</td> |

| 266 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">88.7</td> |

| 267 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">89.0</td> |

| 268 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">91.7</td> |

| 269 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">90.7</td> |

| 270 | </tr> |

| 271 | <tr> |

| 272 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">HMMT Nov 25</td> |

| 273 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">89.8</td> |

| 274 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">87.5</td> |

| 275 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">89.2</td> |

| 276 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">87.5</td> |

| 277 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">89.1</td> |

| 278 | </tr> |

| 279 | <tr> |

| 280 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">HMMT Feb 26</td> |

| 281 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">84.3</td> |

| 282 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">77.2</td> |

| 283 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">78.7</td> |

| 284 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">79.0</td> |

| 285 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">83.6</td> |

| 286 | </tr> |

| 287 | <tr> |

| 288 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">IMOAnswerBench</td> |

| 289 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">79.9</td> |

| 290 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">74.5</td> |

| 291 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">76.8</td> |

| 292 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">74.3</td> |

| 293 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">78.9</td> |

| 294 | </tr> |

| 295 | <tr> |

| 296 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">AIME26</td> |

| 297 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">92.6</td> |

| 298 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">89.2</td> |

| 299 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">91.0</td> |

| 300 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">88.3</td> |

| 301 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">92.7</td> |

| 302 | </tr> |

| 303 | </tbody> |

| 304 | </table> |

| 305 | |

| 306 | <p style="margin-top:12px;font-size:10px;opacity:0.7"> |

| 307 | * SWE-bench series: Internal agent scaffold (bash + file-edit tools); temp=1.0, top_p=0.95, 200K context window. We corrected some problematic tasks in the public set of SWE-bench Pro and evaluated all baselines on the refined benchmark.<br/> |

| 308 | * Terminal-Bench 2.0: Harbor/Terminus-2 harness; 3h timeout, 32 CPU/48 GB RAM; temp=1.0, top_p=0.95, top_k=20, max_tokens=80K, 256K ctx; avg of 5 runs.<br/> |

| 309 | * SkillsBench: Evaluated via OpenCode on 78 tasks (self-contained subset, excluding API-dependent tasks); avg of 5 runs.<br/> |

| 310 | * NL2Repo: Others are evaluated via Claude Code (temp=1.0, top_p=0.95, max_turns=900).<br/> |

| 311 | * QwenClawBench: An internal real-user-distribution Claw agent benchmark (open-sourcing soon); temp=0.6, 256K ctx.<br/> |

| 312 | * QwenWebBench: An internal front-end code generation benchmark; bilingual (EN/CN), 7 categories (Web Design, Web Apps, Games, SVG, Data Visualization, Animation, and 3D); auto-render + multimodal judge (code/visual correctness); BT/Elo rating system.<br/> |

| 313 | * TAU3-Bench: We use the official user model (gpt-5.2, low reasoning effort) + default BM25 retrieval.<br/> |

| 314 | * VITA-Bench: Average of subdomain scores; claude-4-sonnet is used as the judge because the official judge (claude-3.7-sonnet) is no longer available.<br/> |

| 315 | * MCPMark: GitHub MCP v0.30.3; Playwright responses truncated at 32K tokens.<br/> |

| 316 | * MCP-Atlas: Public set score; gemini-2.5-pro as judge.<br/> |

| 317 | * AIME26: We use the full AIME 2026 (I & II), so the scores may differ from those reported in the Qwen3.5 notes.<br/> |

| 318 | </p> |

| 319 | |

| 320 | </div> |
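The QwenWebBench row reports ratings rather than percentages, and the footnote describes a BT/Elo rating system. As a rough aid for reading those numbers, the sketch below applies the conventional Elo logistic formula with a 400-point scale. This is an assumption: the README does not state the scale or how the ratings were fitted, so the result should be read as an illustration of how rating gaps map to expected win rates, not as the benchmark's actual procedure. The function name `elo_expected_score` is hypothetical.

```python
def elo_expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected score of A against B under the conventional Elo logistic model.

    The 400-point scale is the usual chess convention and is an assumption here;
    the README does not specify the scale used by QwenWebBench.
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


# Ratings taken from the QwenWebBench row of the table above.
qwen36_35b_a3b = 1397
qwen35_27b = 1068

p = elo_expected_score(qwen36_35b_a3b, qwen35_27b)
print(f"Expected pairwise win rate under the assumed scale: {p:.3f}")
```

With equal ratings the function returns 0.5, and the two directions of any pairing sum to 1, which is the defining property of a Bradley-Terry style model.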

| 321 | |

| 322 | |

| 323 | ### Vision Language |

| 324 | |

| 325 | <div style="font-family:-apple-system,BlinkMacSystemFont,'Segoe UI',Roboto,sans-serif;max-width:1000px;margin:0 auto;padding:16px 0"> |

| 326 | <table style="width:100%;border-collapse:collapse;font-size:13px"> |

| 327 | <thead><tr> |

| 328 | <th style="padding:10px 7px;text-align:left;font-weight:600;border-bottom:2px solid #7c3aed;color:#7c3aed"></th><th style="padding:10px 7px;text-align:center;font-weight:500;border-bottom:2px solid #7c3aed;color:#7c3aed;font-size: 14px;">Qwen3.5-27B</th><th style="padding:10px 7px;text-align:center;font-weight:500;border-bottom:2px solid #7c3aed;color:#7c3aed;font-size: 14px;">Claude-Sonnet-4.5</th><th style="padding:10px 7px;text-align:center;font-weight:500;border-bottom:2px solid #7c3aed;color:#7c3aed;font-size: 14px;">Gemma4-31B</th><th style="padding:10px 7px;text-align:center;font-weight:500;border-bottom:2px solid #7c3aed;color:#7c3aed;font-size: 14px;">Gemma4-26BA4B</th><th style="padding:10px 7px;text-align:center;font-weight:500;border-bottom:2px solid #7c3aed;color:#7c3aed;font-size: 14px;">Qwen3.5-35B-A3B</th><th style="padding:10px 7px;text-align:center;font-weight:500;border-bottom:2px solid #7c3aed;color:#7c3aed;font-size: 14px;">Qwen3.6-35B-A3B</th></tr></thead> |

| 329 | <tbody> |

| 330 | <tr><td colspan="7" style="padding:8px 12px;font-weight:600;color:#7c3aed;border-bottom:1px solid rgba(124, 58, 237, 0.2);background:rgba(124, 58, 237, 0.1)">STEM and Puzzle</td></tr> |

| 331 | <tr> |

| 332 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">MMMU</td> |

| 333 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">82.3</td> |

| 334 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">79.6</td> |

| 335 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">80.4</td> |

| 336 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">78.4</td> |

| 337 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">81.4</td> |

| 338 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">81.7</td> |

| 339 | </tr> |

| 340 | <tr> |

| 341 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">MMMU-Pro</td> |

| 342 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">75.0</td> |

| 343 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">68.4</td> |

| 344 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">76.9*</td> |

| 345 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">73.8*</td> |

| 346 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">75.1</td> |

| 347 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">75.3</td> |

| 348 | </tr> |

| 349 | <tr> |

| 350 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">MathVista (mini)</td> |

| 351 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">87.8</td> |

| 352 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">79.8</td> |

| 353 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">79.3</td> |

| 354 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">79.4</td> |

| 355 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">86.2</td> |

| 356 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">86.4</td> |

| 357 | </tr> |

| 358 | <tr> |

| 359 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">ZEROBench_sub</td> |

| 360 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">36.2</td> |

| 361 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">26.3</td> |

| 362 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">26.0</td> |

| 363 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">26.3</td> |

| 364 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">34.1</td> |

| 365 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">34.4</td> |

| 366 | </tr> |

| 367 | <tr><td colspan="7" style="padding:8px 12px;font-weight:600;color:#7c3aed;border-bottom:1px solid rgba(124, 58, 237, 0.2);background:rgba(124, 58, 237, 0.1)">General VQA</td></tr> |

| 368 | <tr> |

| 369 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">RealWorldQA</td> |

| 370 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">83.7</td> |

| 371 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">70.3</td> |

| 372 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">72.3</td> |

| 373 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">72.2</td> |

| 374 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">84.1</td> |

| 375 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">85.3</td> |

| 376 | </tr> |

| 377 | <tr> |

| 378 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">MMBench<sub><small>EN-DEV-v1.1</small></sub></td> |

| 379 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">92.6</td> |

| 380 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">88.3</td> |

| 381 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">90.9</td> |

| 382 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">89.0</td> |

| 383 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">91.5</td> |

| 384 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">92.8</td> |

| 385 | </tr> |

| 386 | <tr> |

| 387 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">SimpleVQA</td> |

| 388 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">56.0</td> |

| 389 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">57.6</td> |

| 390 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">52.9</td> |

| 391 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">52.2</td> |

| 392 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">58.3</td> |

| 393 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">58.9</td> |

| 394 | </tr> |

| 395 | <tr> |

| 396 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">HallusionBench</td> |

| 397 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">70.0</td> |

| 398 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">59.9</td> |

| 399 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">67.4</td> |

| 400 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">66.1</td> |

| 401 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">67.9</td> |

| 402 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">69.8</td> |

| 403 | </tr> |

| 404 | <tr><td colspan="7" style="padding:8px 12px;font-weight:600;color:#7c3aed;border-bottom:1px solid rgba(124, 58, 237, 0.2);background:rgba(124, 58, 237, 0.1)">Text Recognition and Document Understanding</td></tr> |

| 405 | <tr> |

| 406 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">OmniDocBench1.5</td> |

| 407 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">88.9</td> |

| 408 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">85.8</td> |

| 409 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">80.1</td> |

| 410 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">74.4</td> |

| 411 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">89.3</td> |

| 412 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">89.9</td> |

| 413 | </tr> |

| 414 | <tr> |

| 415 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">CharXiv(RQ)</td> |

| 416 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">79.5</td> |

| 417 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">67.2</td> |

| 418 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">67.9</td> |

| 419 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">69.0</td> |

| 420 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">77.5</td> |

| 421 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">78.0</td> |

| 422 | </tr> |

| 423 | <tr> |

| 424 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">CC-OCR</td> |

| 425 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">81.0</td> |

| 426 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">68.1</td> |

| 427 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">75.7</td> |

| 428 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">74.5</td> |

| 429 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">80.7</td> |

| 430 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">81.9</td> |

| 431 | </tr> |

| 432 | <tr> |

| 433 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">AI2D_TEST</td> |

| 434 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">92.9</td> |

| 435 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">87.0</td> |

| 436 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">89.0</td> |

| 437 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">88.3</td> |

| 438 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">92.6</td> |

| 439 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">92.7</td> |

| 440 | </tr> |

| 441 | <tr><td colspan="7" style="padding:8px 12px;font-weight:600;color:#7c3aed;border-bottom:1px solid rgba(124, 58, 237, 0.2);background:rgba(124, 58, 237, 0.1)">Spatial Intelligence</td></tr> |

| 442 | <tr> |

| 443 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">RefCOCO(avg)</td> |

| 444 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">90.9</td> |

| 445 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 446 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 447 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 448 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">89.2</td> |

| 449 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">92.0</td> |

| 450 | </tr> |

| 451 | <tr> |

| 452 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">ODInW13</td> |

| 453 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">41.1</td> |

| 454 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 455 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 456 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 457 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">42.6</td> |

| 458 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">50.8</td> |

| 459 | </tr> |

| 460 | <tr> |

| 461 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">EmbSpatialBench</td> |

| 462 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">84.5</td> |

| 463 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">71.8</td> |

| 464 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 465 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 466 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">83.1</td> |

| 467 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">84.3</td> |

| 468 | </tr> |

| 469 | <tr> |

| 470 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">RefSpatialBench</td> |

| 471 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">67.7</td> |

| 472 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 473 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 474 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 475 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">63.5</td> |

| 476 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">64.3</td> |

| 477 | </tr> |

| 478 | <tr><td colspan="7" style="padding:8px 12px;font-weight:600;color:#7c3aed;border-bottom:1px solid rgba(124, 58, 237, 0.2);background:rgba(124, 58, 237, 0.1)">Video Understanding</td></tr> |

| 479 | <tr> |

| 480 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">VideoMME<sub><small>(w sub.)</small></sub></td> |

| 481 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">87.0</td> |

| 482 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">81.1</td> |

| 483 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 484 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 485 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">86.6</td> |

| 486 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">86.6</td> |

| 487 | </tr> |

| 488 | <tr> |

| 489 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">VideoMME<sub><small>(w/o sub.)</small></sub></td> |

| 490 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">82.8</td> |

| 491 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">75.3</td> |

| 492 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 493 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 494 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">82.5</td> |

| 495 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">82.5</td> |

| 496 | </tr> |

| 497 | <tr> |

| 498 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">VideoMMMU</td> |

| 499 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">82.3</td> |

| 500 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">77.6</td> |

| 501 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">81.6</td> |

| 502 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">76.0</td> |

| 503 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">80.4</td> |

| 504 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">83.7</td> |

| 505 | </tr> |

| 506 | <tr> |

| 507 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">MLVU</td> |

| 508 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">85.9</td> |

| 509 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">72.8</td> |

| 510 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 511 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 512 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">85.6</td> |

| 513 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">86.2</td> |

| 514 | </tr> |

| 515 | <tr> |

| 516 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">MVBench</td> |

| 517 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">74.6</td> |

| 518 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 519 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 520 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 521 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">74.8</td> |

| 522 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">74.6</td> |

| 523 | </tr> |

| 524 | <tr> |

| 525 | <td style="padding:7px 7px;padding-left:20px;border-bottom:1px solid rgba(128, 128, 128, 0.15);">LVBench</td> |

| 526 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">73.6</td> |

| 527 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 528 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 529 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">--</td> |

| 530 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">71.4</td> |

| 531 | <td style="padding:7px 7px;text-align:center;border-bottom:1px solid rgba(128, 128, 128, 0.15)">71.4</td> |

| 532 | </tr> |

| 533 | </tbody> |

| 534 | </table> |

| 535 | <p style="margin-top:12px;font-size:10px;opacity:0.7"> |

| 536 | * Empty cells (--) indicate scores not available or not applicable. |

| 537 | </p> |

| 538 | </div> |

| 539 | |

| 540 | ## Quickstart |

| 541 | |

| 542 | For streamlined integration, we recommend using Qwen3.6 via APIs. Below is a guide to using Qwen3.6 via an OpenAI-compatible API. |

| 543 | |

| 544 | ### Serving Qwen3.6 |

| 545 | |

| 546 | Qwen3.6 can be served via APIs with popular inference frameworks. |

| 547 | Below, we show example commands to launch OpenAI-compatible API servers for Qwen3.6 models. |

| 548 | |

| 549 | > [!Important] |

| 550 | > Inference efficiency and throughput vary significantly across frameworks. |

| 551 | > We recommend using the latest framework versions to ensure optimal performance and compatibility. |

| 552 | > For production workloads or high-throughput scenarios, dedicated serving engines such as SGLang, KTransformers or vLLM are strongly recommended. |

| 553 | |

| 554 | > [!Important] |

| 555 | > The model has a default context length of 262,144 tokens. |

| 556 | > If you encounter out-of-memory (OOM) errors, consider reducing the context window. |

| 557 | > However, because Qwen3.6 leverages extended context for complex tasks, we advise maintaining a context length of at least 128K tokens to preserve thinking capabilities. |
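
As a rough aid for choosing a context window, the sketch below computes how many tokens remain for the prompt once `max_tokens` is reserved for generation. It assumes the serving engine requires prompt tokens plus `max_tokens` to fit within the configured context length, which is common but should be checked for your framework. The helper names are illustrative and not part of any library, and "128K" is read here as 131,072 tokens.

```python
DEFAULT_CONTEXT_LENGTH = 262_144      # default context length stated above
MIN_RECOMMENDED_CONTEXT = 128 * 1024  # "at least 128K tokens", read as 131,072


def prompt_budget(max_tokens: int, context_length: int = DEFAULT_CONTEXT_LENGTH) -> int:
    """Tokens left for the prompt after reserving `max_tokens` for the response."""
    if not 0 < max_tokens < context_length:
        raise ValueError("max_tokens must be positive and smaller than the context length")
    return context_length - max_tokens


def below_recommended(context_length: int) -> bool:
    """True if a reduced context window falls under the advised 128K minimum."""
    return context_length < MIN_RECOMMENDED_CONTEXT


print(prompt_budget(81_920))       # 180224
print(below_recommended(65_536))   # True
```
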

| 558 | |

| 559 | #### SGLang |

| 560 | |

| 561 | [SGLang](https://github.com/sgl-project/sglang) is a fast serving framework for large language models and vision language models. |

| 562 | `sglang>=0.5.10` is recommended for Qwen3.6, which can be installed using the following command in a fresh environment: |

| 563 | ```shell |

| 564 | uv pip install "sglang[all]" |

| 565 | ``` |

| 566 | See [its documentation](https://docs.sglang.ai/get_started/install.html) for more details. |

| 567 | |

| 568 | The following will create API endpoints at `http://localhost:8000/v1`: |

| 569 | |

| 570 | - **Standard Version**: The following command creates an API endpoint with a maximum context length of 262,144 tokens using tensor parallelism across 8 GPUs. |

| 571 | |

| 572 | ```shell |

| 573 | python -m sglang.launch_server --model-path Qwen/Qwen3.6-35B-A3B --port 8000 --tp-size 8 --mem-fraction-static 0.8 --context-length 262144 --reasoning-parser qwen3 |

| 574 | ``` |

| 575 | |

| 576 | - **Tool Use**: To support tool use, you can use the following command. |

| 577 | |

| 578 | ```shell |

| 579 | python -m sglang.launch_server --model-path Qwen/Qwen3.6-35B-A3B --port 8000 --tp-size 8 --mem-fraction-static 0.8 --context-length 262144 --reasoning-parser qwen3 --tool-call-parser qwen3_coder |

| 580 | ``` |

| 581 | |

| 582 | - **Multi-Token Prediction (MTP)**: The following command is recommended for MTP: |

| 583 | |

| 584 | ```shell |

| 585 | python -m sglang.launch_server --model-path Qwen/Qwen3.6-35B-A3B --port 8000 --tp-size 8 --mem-fraction-static 0.8 --context-length 262144 --reasoning-parser qwen3 --speculative-algo NEXTN --speculative-num-steps 3 --speculative-eagle-topk 1 --speculative-num-draft-tokens 4 |

| 586 | ``` |

| 587 | |

| 588 | For a detailed deployment guide, see the [SGLang Qwen3.5 Cookbook](https://lmsysorg.mintlify.app/cookbook/llm/Qwen/Qwen3.5). |

| 589 | |

| 590 | #### vLLM |

| 591 | |

| 592 | [vLLM](https://github.com/vllm-project/vllm) is a high-throughput and memory-efficient inference and serving engine for LLMs. |

| 593 | `vllm>=0.19.0` is recommended for Qwen3.6, which can be installed using the following command in a fresh environment: |

| 594 | ```shell |

| 595 | uv pip install vllm --torch-backend=auto |

| 596 | ``` |

| 597 | See [its documentation](https://docs.vllm.ai/en/stable/getting_started/installation/index.html) for more details. |

| 598 | |

| 599 | |

| 600 | The following will create API endpoints at `http://localhost:8000/v1`: |

| 601 | |

| 602 | - **Standard Version**: The following command creates an API endpoint with a maximum context length of 262,144 tokens using tensor parallelism across 8 GPUs. |

| 603 | |

| 604 | ```shell |

| 605 | vllm serve Qwen/Qwen3.6-35B-A3B --port 8000 --tensor-parallel-size 8 --max-model-len 262144 --reasoning-parser qwen3 |

| 606 | ``` |

| 607 | |

| 608 | - **Tool Use**: To support tool use, you can use the following command. |

| 609 | |

| 610 | ```shell |

| 611 | vllm serve Qwen/Qwen3.6-35B-A3B --port 8000 --tensor-parallel-size 8 --max-model-len 262144 --reasoning-parser qwen3 --enable-auto-tool-choice --tool-call-parser qwen3_coder |

| 612 | ``` |

| 613 | |

| 614 | - **Multi-Token Prediction (MTP)**: The following command is recommended for MTP: |

| 615 | |

| 616 | ```shell |

| 617 | vllm serve Qwen/Qwen3.6-35B-A3B --port 8000 --tensor-parallel-size 8 --max-model-len 262144 --reasoning-parser qwen3 --speculative-config '{"method":"qwen3_next_mtp","num_speculative_tokens":2}' |

| 618 | ``` |

| 619 | |

| 620 | - **Text-Only**: The following command skips the vision encoder and multimodal profiling to free up memory for additional KV cache: |

| 621 | |

| 622 | ```shell |

| 623 | vllm serve Qwen/Qwen3.6-35B-A3B --port 8000 --tensor-parallel-size 8 --max-model-len 262144 --reasoning-parser qwen3 --language-model-only |

| 624 | ``` |

| 625 | |

| 626 | For a detailed deployment guide, see the [vLLM Qwen3.5 Recipe](https://docs.vllm.ai/projects/recipes/en/latest/Qwen/Qwen3.5.html). |

| 627 | |

| 628 | #### KTransformers |

| 629 | |

| 630 | [KTransformers](https://github.com/kvcache-ai/ktransformers) is a flexible framework for experiencing cutting-edge LLM inference optimizations with CPU-GPU heterogeneous computing. |

| 631 | For running Qwen3.6 with KTransformers, see the [KTransformers Deployment Guide](https://github.com/kvcache-ai/ktransformers/blob/main/doc/en/Qwen3.5.md). |

| 632 | |

| 633 | #### Hugging Face Transformers |

| 634 | |

| 635 | Hugging Face Transformers contains a _lightweight_ server which can be used for quick testing and moderate-load deployment. |

| 636 | The latest `transformers` is required for Qwen3.6: |

| 637 | ```shell |

| 638 | pip install "transformers[serving]" |

| 639 | ``` |

| 640 | See [its documentation](https://huggingface.co/docs/transformers/main/serving) for more details. Please also make sure torchvision and pillow are installed. |

| 641 | |

| 642 | Then, run `transformers serve` to launch a server with API endpoints at `http://localhost:8000/v1`; it will place the model on accelerators if available: |

| 643 | ```shell |

| 644 | transformers serve Qwen/Qwen3.6-35B-A3B --port 8000 --continuous-batching |

| 645 | ``` |
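
Once any of the servers above is running, it can help to confirm that the model is actually being served before sending chat requests. The following is a minimal sketch that reads the model IDs from the `/v1/models` endpoint through the OpenAI Python SDK. `served_model_ids` is an illustrative helper, and the assumption that the server reports the model under the ID `Qwen/Qwen3.6-35B-A3B` depends on how it was launched.

```python
def served_model_ids(client) -> list[str]:
    """Return the model IDs reported by the server's /v1/models endpoint.

    `client` is expected to be an `openai.OpenAI` instance (or any object
    exposing the same `models.list()` interface).
    """
    return [model.id for model in client.models.list().data]


# Example usage against a local server (requires `pip install openai`):
#
#   from openai import OpenAI
#   client = OpenAI(base_url="http://localhost:8000/v1", api_key="EMPTY")
#   print(served_model_ids(client))
```
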

| 646 | |

| 647 | ### Using Qwen3.6 via the Chat Completions API |

| 648 | |

| 649 | The chat completions API is accessible via standard HTTP requests or OpenAI SDKs. |

| 650 | Here, we show examples using the OpenAI Python SDK. |

| 651 | |

| 652 | Before starting, make sure the SDK is installed and that the API key and the API base URL are configured, e.g.: |

| 653 | ```shell |

| 654 | pip install -U openai |

| 655 | |

| 656 | # Set the following accordingly |

| 657 | export OPENAI_BASE_URL="http://localhost:8000/v1" |

| 658 | export OPENAI_API_KEY="EMPTY" |

| 659 | ``` |

| 660 | |

| 661 | > [!Tip] |

| 662 | > We recommend using the following sets of sampling parameters for generation: |

| 663 | > - Thinking mode for general tasks: `temperature=1.0, top_p=0.95, top_k=20, min_p=0.0, presence_penalty=1.5, repetition_penalty=1.0` |

| 664 | > - Thinking mode for precise coding tasks (e.g. WebDev): `temperature=0.6, top_p=0.95, top_k=20, min_p=0.0, presence_penalty=0.0, repetition_penalty=1.0` |

| 665 | > - Instruct (or non-thinking) mode: `temperature=0.7, top_p=0.80, top_k=20, min_p=0.0, presence_penalty=1.5, repetition_penalty=1.0` |

| 666 | > |

| 667 | > Please note that the support for sampling parameters varies according to inference frameworks. |
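
To avoid retyping these values, the recommendations can be kept in one place and expanded into request arguments. The sketch below is one possible way to do that: the preset names (`thinking_general`, `thinking_coding`, `instruct`) and the helper `sampling_kwargs` are made up for illustration, and the split assumes that `temperature`, `top_p`, and `presence_penalty` are passed as named SDK arguments while the remaining fields travel in `extra_body`. Whether the server honors each `extra_body` field depends on the inference framework.

```python
# Values copied from the recommendations above; preset names are illustrative.
PRESETS = {
    "thinking_general": dict(temperature=1.0, top_p=0.95, top_k=20, min_p=0.0,
                             presence_penalty=1.5, repetition_penalty=1.0),
    "thinking_coding": dict(temperature=0.6, top_p=0.95, top_k=20, min_p=0.0,
                            presence_penalty=0.0, repetition_penalty=1.0),
    "instruct": dict(temperature=0.7, top_p=0.80, top_k=20, min_p=0.0,
                     presence_penalty=1.5, repetition_penalty=1.0),
}

# Parameters the OpenAI SDK accepts as named arguments; the rest go in `extra_body`.
STANDARD_PARAMS = ("temperature", "top_p", "presence_penalty")


def sampling_kwargs(mode: str) -> dict:
    """Build keyword arguments for `client.chat.completions.create` from a preset."""
    preset = PRESETS[mode]
    kwargs = {name: preset[name] for name in STANDARD_PARAMS}
    kwargs["extra_body"] = {k: v for k, v in preset.items() if k not in STANDARD_PARAMS}
    return kwargs


# Example usage:
#   client.chat.completions.create(model=..., messages=..., **sampling_kwargs("thinking_coding"))
```
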

| 668 | |

| 669 | > [!Important] |

| 670 | > Qwen3.6 models operate in thinking mode by default, generating thinking content signified by `<think>\n...</think>\n\n` before producing the final responses. |

| 671 | > To disable thinking content and obtain a direct response, refer to the examples [here](#instruct-or-non-thinking-mode). |

| 672 | |

| 673 | |

| 674 | #### Text-Only Input |

| 675 | |

| 676 | ```python |

| 677 | from openai import OpenAI |

| 678 | # Configured by environment variables |

| 679 | client = OpenAI() |

| 680 | |

| 681 | messages = [ |

| 682 | {"role": "user", "content": "Type \"I love Qwen3.6\" backwards"}, |

| 683 | ] |

| 684 | |

| 685 | chat_response = client.chat.completions.create( |

| 686 | model="Qwen/Qwen3.6-35B-A3B", |

| 687 | messages=messages, |

| 688 | max_tokens=81920, |

| 689 | temperature=1.0, |

| 690 | top_p=0.95, |

| 691 | presence_penalty=1.5, |

| 692 | extra_body={ |

| 693 | "top_k": 20, |

| 694 | }, |

| 695 | ) |

| 696 | print("Chat response:", chat_response) |

| 697 | ``` |
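
The example above prints the whole response object. In practice you usually want the final answer separately from the thinking content. The sketch below shows one way to do that, under two assumptions: a server launched with a reasoning parser exposes the thinking text in a separate message field (the field name, `reasoning_content` or `reasoning`, varies by framework and version), and a server without one leaves the thinking inline, terminated by `</think>`. `split_reasoning` is an illustrative helper, not part of the SDK.

```python
def split_reasoning(message):
    """Return `(reasoning, answer)` for an assistant message.

    `reasoning` is None when no thinking content can be found.
    """
    content = message.content or ""
    # Assumed field names; check what your serving framework actually returns.
    reasoning = getattr(message, "reasoning_content", None) or getattr(message, "reasoning", None)
    if reasoning is None and "</think>" in content:
        # Fallback: thinking is inline in the content, closed by `</think>`.
        reasoning, _, content = content.partition("</think>")
        reasoning = reasoning.replace("<think>", "", 1)
    return (reasoning.strip() if reasoning else None), content.strip()


# Example usage:
#   reasoning, answer = split_reasoning(chat_response.choices[0].message)
#   print(answer)
```
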

| 698 | |

| 699 | |

| 700 | #### Image Input |

| 701 | |

| 702 | ```python |

| 703 | from openai import OpenAI |

| 704 | # Configured by environment variables |

| 705 | client = OpenAI() |

| 706 | |

| 707 | messages = [ |

| 708 | { |

| 709 | "role": "user", |

| 710 | "content": [ |

| 711 | { |

| 712 | "type": "image_url", |

| 713 | "image_url": { |

| 714 | "url": "https://qianwen-res.oss-accelerate.aliyuncs.com/Qwen3.5/demo/CI_Demo/mathv-1327.jpg" |

| 715 | } |

| 716 | }, |

| 717 | { |

| 718 | "type": "text", |

| 719 | "text": "The centres of the four illustrated circles are in the corners of the square. The two big circles touch each other and also the two little circles. With which factor do you have to multiply the radii of the little circles to obtain the radius of the big circles?\nChoices:\n(A) $\\frac{2}{9}$\n(B) $\\sqrt{5}$\n(C) $0.8 \\cdot \\pi$\n(D) 2.5\n(E) $1+\\sqrt{2}$" |

| 720 | } |

| 721 | ] |

| 722 | } |

| 723 | ] |

| 724 | |

| 725 | chat_response = client.chat.completions.create( |

| 726 | model="Qwen/Qwen3.6-35B-A3B", |

| 727 | messages=messages, |

| 728 | max_tokens=81920, |

| 729 | temperature=1.0, |

| 730 | top_p=0.95, |

| 731 | presence_penalty=1.5, |

| 732 | extra_body={ |

| 733 | "top_k": 20, |

| 734 | }, |

| 735 | ) |

| 736 | print("Chat response:", chat_response) |

| 737 | ``` |
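
The example above references an image by HTTP URL. For a local file, a common approach with OpenAI-compatible APIs is to embed the image as a base64 `data:` URL in the same `image_url` field. The following is a minimal sketch, assuming the serving framework accepts `data:` URLs; `image_to_data_url` and `image_message` are illustrative helpers.

```python
import base64
import mimetypes
from pathlib import Path


def image_to_data_url(path) -> str:
    """Encode a local image file as a base64 `data:` URL."""
    path = Path(path)
    mime_type, _ = mimetypes.guess_type(path.name)
    if mime_type is None or not mime_type.startswith("image/"):
        raise ValueError(f"cannot infer an image MIME type from {path.name!r}")
    encoded = base64.b64encode(path.read_bytes()).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"


def image_message(path, question: str) -> dict:
    """Build a user message with one local image followed by a text question."""
    return {
        "role": "user",
        "content": [
            {"type": "image_url", "image_url": {"url": image_to_data_url(path)}},
            {"type": "text", "text": question},
        ],
    }


# Example usage:
#   messages = [image_message("diagram.png", "What does this diagram show?")]
```
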

| 738 | |

| 739 | #### Video Input |

| 740 | |

| 741 | ```python |

| 742 | from openai import OpenAI |

| 743 | # Configured by environment variables |

| 744 | client = OpenAI() |

| 745 | |

| 746 | messages = [ |

| 747 | { |

| 748 | "role": "user", |

| 749 | "content": [ |

| 750 | { |

| 751 | "type": "video_url", |

| 752 | "video_url": { |

| 753 | "url": "https://qianwen-res.oss-accelerate.aliyuncs.com/Qwen3.5/demo/video/N1cdUjctpG8.mp4" |

| 754 | } |

| 755 | }, |

| 756 | { |

| 757 | "type": "text", |

| 758 | "text": "How many porcelain jars were discovered in the niches located in the primary chamber of the tomb?" |

| 759 | } |

| 760 | ] |

| 761 | } |

| 762 | ] |

| 763 | |

| 764 | # When vLLM is launched with `--media-io-kwargs '{"video": {"num_frames": -1}}'`, |

| 765 | # video frame sampling can be configured via `extra_body` (e.g., by setting `fps`). |

| 766 | # This feature is currently supported only in vLLM. |

| 767 | # |

| 768 | # By default, `fps=2` and `do_sample_frames=True`. |

| 769 | # With `do_sample_frames=True`, you can customize the `fps` value to set your desired video sampling rate. |

| 770 | chat_response = client.chat.completions.create( |

| 771 | model="Qwen/Qwen3.6-35B-A3B", |

| 772 | messages=messages, |

| 773 | max_tokens=81920, |

| 774 | temperature=1.0, |

| 775 | top_p=0.95, |

| 776 | presence_penalty=1.5, |

| 777 | extra_body={ |

| 778 | "top_k": 20, |

| 779 | "mm_processor_kwargs": {"fps": 2, "do_sample_frames": True}, |

| 780 | }, |

| 781 | ) |

| 782 | |

| 783 | print("Chat response:", chat_response) |

| 784 | ``` |

| 785 | |

| 786 | |

| 787 | #### Instruct (or Non-Thinking) Mode |

| 788 | |

| 789 | > [!Important] |

| 790 | > Qwen3.6 does not officially support the soft switch of Qwen3, i.e., `/think` and `/nothink`. |

| 791 | |

| 792 | By default, Qwen3.6 thinks before responding. |

| 793 | You can obtain a direct response from the model without thinking by configuring the API parameters. |

| 794 | For example, |

| 795 | ```python |

| 796 | from openai import OpenAI |

| 797 | # Configured by environment variables |

| 798 | client = OpenAI() |

| 799 | |

| 800 | messages = [ |

| 801 | { |

| 802 | "role": "user", |

| 803 | "content": [ |

| 804 | { |

| 805 | "type": "image_url", |

| 806 | "image_url": { |

| 807 | "url": "https://qianwen-res.oss-accelerate.aliyuncs.com/Qwen3.6/demo/RealWorld/RealWorld-04.png" |

| 808 | } |

| 809 | }, |

| 810 | { |

| 811 | "type": "text", |

| 812 | "text": "Where is this?" |

| 813 | } |

| 814 | ] |

| 815 | } |

| 816 | ] |

| 817 | |

| 818 | chat_response = client.chat.completions.create( |

| 819 | model="Qwen/Qwen3.6-35B-A3B", |

| 820 | messages=messages, |

| 821 | max_tokens=32768, |

| 822 | temperature=0.7, |

| 823 | top_p=0.8, |

| 824 | presence_penalty=1.5, |

| 825 | extra_body={ |

| 826 | "top_k": 20, |

| 827 | "chat_template_kwargs": {"enable_thinking": False}, |

| 828 | }, |

| 829 | ) |

| 830 | print("Chat response:", chat_response) |

| 831 | ``` |

| 832 | |

| 833 | > [!Note] |

| 834 | > If you are using APIs from Alibaba Cloud Model Studio, in addition to changing `model`, please use `"enable_thinking": False` instead of `"chat_template_kwargs": {"enable_thinking": False}`. |

| 835 | |

| 836 | #### Preserve Thinking |

| 837 | |

| 838 | By default, only the thinking blocks generated while handling the latest user message are retained, resulting in a pattern commonly known as interleaved thinking. |

| 839 | Qwen3.6 has been additionally trained to preserve and leverage thinking traces from historical messages. |

| 840 | You can enable this behavior by setting the `preserve_thinking` option: |

```python
from openai import OpenAI

# Configured by environment variables
client = OpenAI()

messages = [...]

chat_response = client.chat.completions.create(
    model="Qwen/Qwen3.6-35B-A3B",
    messages=messages,
    max_tokens=32768,
    temperature=0.7,
    top_p=0.8,
    presence_penalty=1.5,
    extra_body={
        "top_k": 20,
        "chat_template_kwargs": {"preserve_thinking": True},
    },
)
print("Chat response:", chat_response)
```

> [!Note]
> If you are using APIs from Alibaba Cloud Model Studio, in addition to changing `model`, please use `"preserve_thinking": True` instead of `"chat_template_kwargs": {"preserve_thinking": True}`.
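
The notes above describe the same switches in two shapes: nested under `chat_template_kwargs` for self-hosted OpenAI-compatible servers, and flat for Alibaba Cloud Model Studio. A minimal sketch of a helper that builds the matching `extra_body` follows. The function name `thinking_extra_body` and its `flat` flag are hypothetical and not part of any SDK.

```python
from typing import Any, Dict, Optional


def thinking_extra_body(
    enable_thinking: Optional[bool] = None,
    preserve_thinking: Optional[bool] = None,
    flat: bool = False,
) -> Dict[str, Any]:
    """Build an `extra_body` dict carrying the thinking switches.

    flat=False nests the switches under "chat_template_kwargs" (the shape used
    in the self-hosted examples); flat=True places them at the top level (the
    shape the notes describe for Alibaba Cloud Model Studio).
    Hypothetical helper: the name and arguments are illustrative only.
    """
    switches: Dict[str, Any] = {}
    if enable_thinking is not None:
        switches["enable_thinking"] = enable_thinking
    if preserve_thinking is not None:
        switches["preserve_thinking"] = preserve_thinking

    if flat:
        return switches
    # Omit the wrapper key entirely when no switch was requested.
    return {"chat_template_kwargs": switches} if switches else {}


print(thinking_extra_body(preserve_thinking=True))
print(thinking_extra_body(preserve_thinking=True, flat=True))
```

The returned dict can be merged with other entries such as `"top_k"` before being passed as `extra_body`.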

This capability is particularly beneficial for agent scenarios, where maintaining full reasoning context can enhance decision consistency and, in many cases, reduce overall token consumption by minimizing redundant reasoning. Additionally, it can improve KV cache utilization, optimizing inference efficiency in both thinking and non-thinking modes.

## Agentic Usage

Qwen3.6 excels at tool calling.

### Qwen-Agent

We recommend using [Qwen-Agent](https://github.com/QwenLM/Qwen-Agent) to quickly build agent applications with Qwen3.6.

To define the available tools, you can use an MCP configuration file, use the tools integrated in Qwen-Agent, or integrate other tools yourself.

```python
import os
from qwen_agent.agents import Assistant

# Define LLM
# Using Alibaba Cloud Model Studio
llm_cfg = {
    # Use the OpenAI-compatible model service provided by DashScope:
    'model': 'Qwen3.6-35B-A3B',
    'model_type': 'qwenvl_oai',
    'model_server': 'https://dashscope.aliyuncs.com/compatible-mode/v1',
    'api_key': os.getenv('DASHSCOPE_API_KEY'),

    'generate_cfg': {
        'use_raw_api': True,
        # When using the DashScope OAI API, pass the parameter of whether to enable thinking mode in this way
        'extra_body': {
            'enable_thinking': True,
            'preserve_thinking': True,
        },
    },
}

# Using OpenAI-compatible API endpoint.
# functionality of the deployment frameworks and let Qwen-Agent automate the related operations.
#
# llm_cfg = {
#     # Use your own model service compatible with OpenAI API by vLLM/SGLang:
#     'model': 'Qwen/Qwen3.6-35B-A3B',
#     'model_type': 'qwenvl_oai',
#     'model_server': 'http://localhost:8000/v1',  # api_base
#     'api_key': 'EMPTY',
#
#     'generate_cfg': {
#         'use_raw_api': True,
#         # When using vLLM/SGLang OAI API, pass the parameter of whether to enable thinking mode in this way
#         'extra_body': {
#             'chat_template_kwargs': {'enable_thinking': True, 'preserve_thinking': True}
#         },
#     },
# }

# Define Tools
tools = [
    {'mcpServers': {  # You can specify the MCP configuration file
            "filesystem": {
                "command": "npx",
                "args": ["-y", "@modelcontextprotocol/server-filesystem", "/Users/xxxx/Desktop"]
            }
        }
    }
]

# Define Agent
bot = Assistant(llm=llm_cfg, function_list=tools)

# Streaming generation
messages = [{'role': 'user', 'content': 'Help me organize my desktop.'}]
for responses in bot.run(messages=messages):
    pass
print(responses)

# Streaming generation
messages = [{'role': 'user', 'content': 'Develop a dog website and save it on the desktop'}]
for responses in bot.run(messages=messages):
    pass
print(responses)
```
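
The example above hard-codes the directory exposed to the filesystem MCP server. As a small sketch, the same `tools` entry can be built for an arbitrary directory. `filesystem_mcp_tools` is a hypothetical helper name; the server package and `npx` arguments are copied from the example above.

```python
import os
from typing import Any, Dict, List


def filesystem_mcp_tools(root: str) -> List[Dict[str, Any]]:
    """Return a tools list exposing `root` through the filesystem MCP server.

    Hypothetical helper: it only builds the configuration dict and does not
    start or validate the MCP server.
    """
    # Expand "~" and make the path absolute so the config works for any user.
    directory = os.path.abspath(os.path.expanduser(root))
    return [
        {
            "mcpServers": {
                "filesystem": {
                    "command": "npx",
                    "args": ["-y", "@modelcontextprotocol/server-filesystem", directory],
                }
            }
        }
    ]


tools = filesystem_mcp_tools("~/Desktop")
print(tools[0]["mcpServers"]["filesystem"]["args"])
```

The result can be passed as `function_list` when constructing the `Assistant`, exactly like the literal `tools` list above.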

### Qwen Code

[Qwen Code](https://github.com/QwenLM/qwen-code) is an open-source AI agent for the terminal, optimized for Qwen models. It helps you understand large codebases, automate tedious work, and ship faster.

For more information, please refer to [Qwen Code](https://qwenlm.github.io/qwen-code-docs/).

## Processing Ultra-Long Texts

Qwen3.6 natively supports context lengths of up to 262,144 tokens.
For long-horizon tasks where the total length (including both input and output) exceeds this limit, we recommend using RoPE scaling techniques, e.g., YaRN, to handle long texts effectively.

YaRN is currently supported by several inference frameworks, e.g., `transformers`, `vllm`, `ktransformers` and `sglang`.
In general, there are two approaches to enabling YaRN for supported frameworks:

- Modifying the model configuration file:
  In the `config.json` file, change the `rope_parameters` fields in `text_config` to:
  ```json
  {
      "mrope_interleaved": true,
      "mrope_section": [
          11,
          11,
          10
      ],
      "rope_type": "yarn",
      "rope_theta": 10000000,
      "partial_rotary_factor": 0.25,
      "factor": 4.0,
      "original_max_position_embeddings": 262144
  }
  ```

- Passing command line arguments:

  For `vllm`, you can use
  ```shell
  VLLM_ALLOW_LONG_MAX_MODEL_LEN=1 vllm serve ... --hf-overrides '{"text_config": {"rope_parameters": {"mrope_interleaved": true, "mrope_section": [11, 11, 10], "rope_type": "yarn", "rope_theta": 10000000, "partial_rotary_factor": 0.25, "factor": 4.0, "original_max_position_embeddings": 262144}}}' --max-model-len 1010000
  ```

  For `sglang` and `ktransformers`, you can use
  ```shell
  SGLANG_ALLOW_OVERWRITE_LONGER_CONTEXT_LEN=1 python -m sglang.launch_server ... --json-model-override-args '{"text_config": {"rope_parameters": {"mrope_interleaved": true, "mrope_section": [11, 11, 10], "rope_type": "yarn", "rope_theta": 10000000, "partial_rotary_factor": 0.25, "factor": 4.0, "original_max_position_embeddings": 262144}}}' --context-length 1010000
  ```
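
For the configuration-file approach, the same change can be applied programmatically instead of by hand. The sketch below is a minimal illustration that assumes the loaded `config.json` has a `text_config` object as described above. The helper name `enable_yarn` is hypothetical, and the `"rope_type": "default"` value in the demo input is an assumed placeholder, not taken from the released file.

```python
import copy
import json
from typing import Any, Dict

NATIVE_CONTEXT = 262144  # native context length stated in this section


def enable_yarn(config: Dict[str, Any], factor: float = 4.0) -> Dict[str, Any]:
    """Return a copy of `config` with YaRN enabled in text_config.rope_parameters."""
    patched = copy.deepcopy(config)  # leave the caller's dict untouched
    text_config = patched.setdefault("text_config", {})
    rope = dict(text_config.get("rope_parameters", {}))  # keep existing keys
    rope.update({
        "rope_type": "yarn",
        "factor": factor,
        "original_max_position_embeddings": NATIVE_CONTEXT,
    })
    text_config["rope_parameters"] = rope
    return patched


# In-memory stand-in for config.json; "rope_type": "default" is an assumed placeholder.
demo = {"text_config": {"rope_parameters": {
    "mrope_interleaved": True,
    "mrope_section": [11, 11, 10],
    "rope_type": "default",
    "rope_theta": 10000000,
    "partial_rotary_factor": 0.25,
}}}
print(json.dumps(enable_yarn(demo)["text_config"]["rope_parameters"], indent=2))
```

To apply this to a real checkpoint, load the file with `json.load`, pass the dict through `enable_yarn`, and write it back with `json.dump`; keeping a backup of the original file makes it easy to revert when long contexts are no longer needed.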

> [!NOTE]
> All the notable open-source frameworks implement static YaRN, which means the scaling factor remains constant regardless of input length, **potentially impacting performance on shorter texts.**
> We advise modifying the `rope_parameters` configuration only when processing long contexts is required.
> It is also recommended to modify the `factor` as needed. For example, if the typical context length for your application is 524,288 tokens, it would be better to set `factor` to 2.0.
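
The arithmetic behind the `factor` recommendation is the target context length divided by the native 262,144 tokens. A small sketch follows, with `yarn_factor` as a hypothetical helper name. Returning `None` for lengths at or below the native limit reflects the advice above to leave `rope_parameters` unchanged in that case, not any framework behavior.

```python
from typing import Optional

NATIVE_CONTEXT = 262_144  # native context length stated in this section


def yarn_factor(target_context: int) -> Optional[float]:
    """Return the YaRN factor for `target_context` tokens, or None if no scaling is needed."""
    if target_context <= 0:
        raise ValueError("target_context must be positive")
    if target_context <= NATIVE_CONTEXT:
        return None  # within the native window: keep the default configuration
    return target_context / NATIVE_CONTEXT


print(yarn_factor(524_288))    # 2.0
print(yarn_factor(1_048_576))  # 4.0
print(yarn_factor(100_000))    # None
```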

## Best Practices

To achieve optimal performance, we recommend the following settings:

1. **Sampling Parameters**:
   - We suggest using the following sets of sampling parameters depending on the mode and task type:
     - **Thinking mode for general tasks**:
       `temperature=1.0`, `top_p=0.95`, `top_k=20`, `min_p=0.0`, `presence_penalty=1.5`, `repetition_penalty=1.0`
     - **Thinking mode for precise coding tasks (e.g., WebDev)**:
       `temperature=0.6`, `top_p=0.95`, `top_k=20`, `min_p=0.0`, `presence_penalty=0.0`, `repetition_penalty=1.0`
     - **Instruct (or non-thinking) mode**:
       `temperature=0.7`, `top_p=0.80`, `top_k=20`, `min_p=0.0`, `presence_penalty=1.5`, `repetition_penalty=1.0`
   - For supported frameworks, you can adjust the `presence_penalty` parameter between 0 and 2 to reduce endless repetitions. However, using a higher value may occasionally result in language mixing and a slight decrease in model performance.

2. **Adequate Output Length**: We recommend using an output length of 32,768 tokens for most queries. For benchmarking on highly complex problems, such as those found in math and programming competitions, we suggest setting the max output length to 81,920 tokens. This provides the model with sufficient space to generate detailed and comprehensive responses, thereby enhancing its overall performance.

3. **Standardize Output Format**: We recommend using prompts to standardize model outputs when benchmarking.
   - **Math Problems**: Include "Please reason step by step, and put your final answer within \boxed{}." in the prompt.
   - **Multiple-Choice Questions**: Add the following JSON structure to the prompt to standardize responses: "Please show your choice in the `answer` field with only the choice letter, e.g., `"answer": "C"`."

4. **Long Video Understanding**: To optimize inference efficiency for plain text and images, the `size` parameter in the released `video_preprocessor_config.json` is conservatively configured. It is recommended to set the `longest_edge` parameter in the `video_preprocessor_config.json` file to 469,762,048 (corresponding to 224k video tokens) to enable higher frame-rate sampling for hour-scale videos and thereby achieve superior performance. For example,
   ```json
   {"longest_edge": 469762048, "shortest_edge": 4096}
   ```

   Alternatively, override the default values via engine startup parameters. For implementation details, refer to: [vLLM](https://github.com/vllm-project/vllm/pull/34330) / [SGLang](https://github.com/sgl-project/sglang/pull/18467).
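
The sampling presets from item 1 can be kept in one place and expanded into request arguments. The sketch below mirrors the request shape used in the examples earlier in this document (`temperature`, `top_p`, and `presence_penalty` as top-level arguments, `top_k` inside `extra_body`). Passing `min_p` and `repetition_penalty` through `extra_body` as well is an assumption about the serving framework, and the preset keys and the function name `request_kwargs` are hypothetical.

```python
from typing import Any, Dict

# Values copied from the recommendations in item 1.
SAMPLING_PRESETS: Dict[str, Dict[str, float]] = {
    "thinking_general": {"temperature": 1.0, "top_p": 0.95, "top_k": 20,
                         "min_p": 0.0, "presence_penalty": 1.5, "repetition_penalty": 1.0},
    "thinking_coding": {"temperature": 0.6, "top_p": 0.95, "top_k": 20,
                        "min_p": 0.0, "presence_penalty": 0.0, "repetition_penalty": 1.0},
    "instruct": {"temperature": 0.7, "top_p": 0.80, "top_k": 20,
                 "min_p": 0.0, "presence_penalty": 1.5, "repetition_penalty": 1.0},
}

# Parameters passed as top-level arguments in the examples in this document.
_TOP_LEVEL = ("temperature", "top_p", "presence_penalty")


def request_kwargs(preset: str, max_tokens: int = 32768) -> Dict[str, Any]:
    """Expand a preset name into keyword arguments for chat.completions.create."""
    params = SAMPLING_PRESETS[preset]
    kwargs: Dict[str, Any] = {name: params[name] for name in _TOP_LEVEL}
    kwargs["max_tokens"] = max_tokens
    # Everything else goes through extra_body (assumed to be accepted by the server).
    extra: Dict[str, Any] = {k: v for k, v in params.items() if k not in _TOP_LEVEL}
    if preset == "instruct":
        # Non-thinking mode is selected through the chat template switch.
        extra["chat_template_kwargs"] = {"enable_thinking": False}
    kwargs["extra_body"] = extra
    return kwargs


print(request_kwargs("thinking_coding"))
```

A call would then look like `client.chat.completions.create(model=..., messages=messages, **request_kwargs("instruct"))`. For competition-style benchmarks, `max_tokens=81920` can be passed to match item 2.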

### Citation

If you find our work helpful, feel free to cite us.

```bibtex
@misc{qwen36_35b_a3b,
    title  = {{Qwen3.6-35B-A3B}: Agentic Coding Power, Now Open to All},
    url    = {https://qwen.ai/blog?id=qwen3.6-35b-a3b},
    author = {{Qwen Team}},
    month  = {April},
    year   = {2026}
}
```